Hey Ronald,

yes, I think the next step is to check whether the mount moves on guiding commands at all. But to be honest, even when guiding works, I have trouble finding parameters that give calm guiding. Perhaps those are the limits of alt-az... or we just have to find the right parameters.

>>Attached is a picture of my equipment after a frosty observation night.

>>That setup sounds strange but

Very nice looking setup - my main camera is a Raspberry Pi sensor module too.

So good luck with your tests!

Best regards,

Mat


Hey Ronald,

- indi_synscan_telescope driver,

- SynScan app and

- AZ-GTi mount?

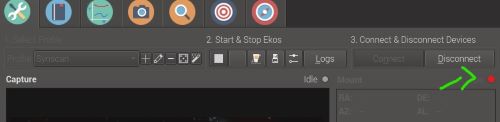

That is my exact setup! SynScan app was installed on Windows (not on a mobile device). A few times the driver was on "error" in the background and stopped working, took me a while to see it:

That little light turned red -> a simple disconnect/start/stop helped.

I hope it works for you too!

The use of the internal guider is perhaps easier for testing (no extra software).

>> I can not imagine that the app allows guiding for AZ-GTi and not for Star Discovery.

That's something I unfortunately don't know. But I could also imagine it.

What does your optical train look like?

Can you send a manual guide pulse (something you can check indoors)?
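For an indoor check without Ekos, a single timed pulse can also be sent from the command line with `indi_setprop`. Here is a minimal Python sketch that only builds the command. It assumes the standard INDI guide properties (`TELESCOPE_TIMED_GUIDE_NS` / `TELESCOPE_TIMED_GUIDE_WE`); the device name "SynScan" and the helper name are my assumptions, so use whatever name the INDI control panel shows:

```python
import shlex


def guide_pulse_command(device, direction, duration_ms,
                        host="localhost", port=7624):
    """Build an indi_setprop call for one timed guide pulse.

    device      -- INDI device name (assumption: check the control panel)
    direction   -- one of "N", "S", "W", "E"
    duration_ms -- pulse length in milliseconds
    """
    # North/south pulses live in one property, west/east in the other.
    axis = {"N": "NS", "S": "NS", "W": "WE", "E": "WE"}[direction]
    prop = (f"{device}.TELESCOPE_TIMED_GUIDE_{axis}"
            f".TIMED_GUIDE_{direction}={duration_ms}")
    return ["indi_setprop", "-h", host, "-p", str(port), prop]


# Print a command that can be pasted into a shell on the Pi.
print(shlex.join(guide_pulse_command("SynScan", "N", 500)))
```

The command is only printed, not executed, so it can be checked before it is sent to the mount.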

Regards,

Mat


Hey Ronald,

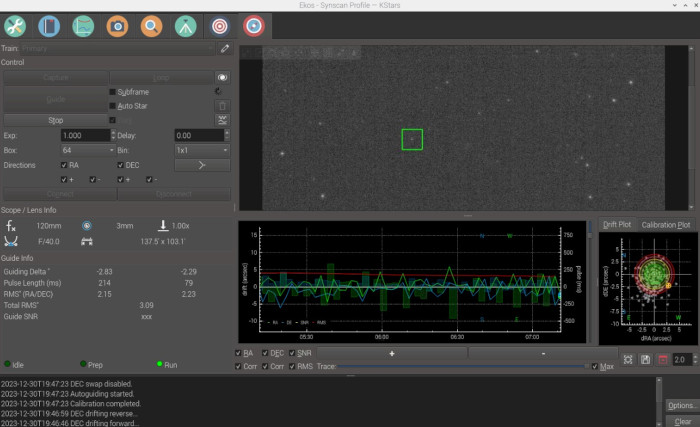

just for the record - I found the mistake (it was me): the optical train was wrong (guiding must of course go via the SynScan driver). With this setting the internal guider works as it should via the SynScan app with the SynScan driver (see screenshot)! Guiding with PHD2 worked as well.

The guiding was shaky, but perhaps it is possible to find good parameters...

Regards,

Mat


Hey Ronald,

Thank you for your detailed reply.

I used the direct version of the driver a lot with my AZ-GTi, and in the end it worked to some extent and I was able to minimize the tracking drift (see here). Guiding also worked in principle (both axes, also with PHD2), but I had the impression that I couldn't beat the remaining drift (it kept building up).

That's why I'm now testing the SynScan INDI driver with the SynScan app. There the drift is even better (with a three-star alignment model), and I think it might be possible to use guiding successfully, but as described, the guiding pulses from the calibration don't seem to be transferred to the mount at all.

Regards,

Mat


Hey Ronald,

Were you successful with the guiding? I have the same issue: calibration via the SynScan driver does not move the mount, although a single pulse from the Guide tab of the INDI settings seems to work.

Regards,

Mat


Hey Nou,

thank you very much for your quick reply. I was able to compile from invent.kde.org (it took three hours but worked fine).

The next time I run into problems I will check out your project!

Mat


Hey folks,

Short question: I have always used KStars 3.6.0 (on an RPi 4 with Raspbian OS) from the Astroberry repo and compiled the newest INDI drivers from

github.com/indilib/indi

Now I want to compile the newest KStars from git as well, and I am a bit confused. I found:

github.com/KDE/kstars

and the build instructions there do a git clone from:

invent.kde.org/education/kstars.git

-> so the question:

Is invent.kde.org/education/kstars.git the correct, latest and original source to clone and install kstars from?

Thank you,

Mat


You are right, that is a good question. To be honest, I don't have the experience to say for sure. Perhaps the model with this mount just works that way in AZ mode.

In any case, it works differently from SynScan, where even a few distant points work well. However, thanks to plate solving, the above method is much more convenient and faster than the SynScan app. I really love KStars when it works - it's fantastic.


Hey Folks,

I've been testing again for a few days now and would like to mark this topic as [solved/workaround]. I changed the title and I added a reference to the end of the thread in the opening post.

Thanks for all the help and input over the last month and thanks especially to michael and jasem.

Here is a short summary of what ended up being the best approach for me:

AZ-GTI altazimuth mode (in my case the x-version)

Motor firmware 3.40

KStars 3.6.0 / INDI from GitHub

Cam for platesolving: ASI 120MM Mini (on 120/3 Scope)

Imaging: Raspberry Pi HQ Cam (on 418/76)

1. Point the scope (0-level) roughly in the direction of the target

2. Boot pi

3. Connect to mount

4. Change mount-settings to:

- Options-tab: 100ms

- (Options-tab: disable Logging )

- Alignment-tab: Change to inbuilt Math / Press Reload OK

- Sitemanagement-tab: check time

- Tracking-tab: All standard (but Deadzone = 4 and Offsets all 0)

- KStars solver setting: "use scale"

5. Slew upwards a bit with "mount control"

6. Solve with option (sync) and slew with goto near the target (may take a few steps)

7. On or near the target: in the Ekos mount tab, set "tracking off" and "clear model"

8. Solve the first time with option (sync), go to the target and solve again

9. Check the drift. If it is there, start the procedure:

- slew with goto to a random point near the target and solve with option sync

- slew with goto back to the target and check drift

- repeat in a kind of a circle around the target

The drift will disappear, but sometimes it only needs 3 sync-points and sometimes (much) more. I also set a sync point every now and then or clear the model after an hour or two.
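To make the "circle around the target" idea concrete, here is a small Python sketch that lists candidate sync points on a ring around a target. It is only an illustration: the 5 degree radius, the number of points and the function name are my assumptions (in practice I just picked points by eye), and the flat-sky approximation is only reasonable for small radii away from the poles:

```python
import math


def sync_points_around(ra_deg, dec_deg, radius_deg=5.0, n_points=6):
    """Return (ra, dec) pairs in degrees, spaced evenly on a ring around a target.

    Uses a flat-sky approximation: the RA offset is stretched by
    1/cos(dec) so the ring has roughly the same angular size in both axes.
    """
    points = []
    for k in range(n_points):
        theta = 2.0 * math.pi * k / n_points
        d_ra = radius_deg * math.cos(theta) / math.cos(math.radians(dec_deg))
        d_dec = radius_deg * math.sin(theta)
        points.append(((ra_deg + d_ra) % 360.0, dec_deg + d_dec))
    return points


# Example: approximate coordinates of M51 (RA about 202.47 deg, Dec about +47.2 deg).
for ra, dec in sync_points_around(202.47, 47.2):
    print(f"slew + solve/sync at RA {ra:7.2f} deg, Dec {dec:6.2f} deg, then return to target")
```

Each printed point corresponds to one round of "slew away, solve with sync, slew back, check drift".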

It should be noted that the drift of course never disappears completely, but in my opinion it can be reduced to the level of the original SynScan app. The extreme drift, in which the target leaves the FOV within a minute, can definitely be slowed down enough to make exposures of 12 seconds or longer possible. The rest is probably hardware, field rotation, loading, leveling and all the other tweaks that are recommended in the many threads about the AZ-GTi.

Attached here is a picture from my bright city balcony from the last three days. It really only takes 5 minutes from switching it on to getting started, which is just a lot of fun for me... next stop - a darker place and clear sky!

M51 - 840 frames of 11 sec each (about 2 h 30 min in total)
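For anyone who wants to check the total integration time for their own stack, the arithmetic is just frames times exposure. A tiny Python sketch (the function name is made up for illustration):

```python
def total_integration(frames, exposure_s):
    """Return total exposure time as (hours, minutes, seconds)."""
    total_s = frames * exposure_s
    hours, rest = divmod(total_s, 3600)
    minutes, seconds = divmod(rest, 60)
    return hours, minutes, seconds


print(total_integration(840, 11))  # (2, 34, 0)
```

So 840 frames of 11 seconds come out at 2 h 34 min, in line with the rounded figure above.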

Further feedback from your experiences (and perhaps optimization of the procedure) is of course always welcome!

Regards,

Mat


Hi Peter,

thank you for your input. I'm glad to hear that the wedge helped you succeed. I have also read about many positive experiences with the AZ-GTi on a wedge. But AZ mode really has a lot of advantages (set up in under 5 minutes, no Polaris needed), so the point of the thread was to get to the bottom of these problems.

I've had really great sessions over the last three days. It really works every time to slow down the drift using the circle procedure. It remains to be seen how much this can be optimized (how to set the sync points perfectly). It's very cloudy right now and over the last few days I've only been able to collect frames bit by bit between the clouds, but with the new method the imaging starts for me:

This was a test on M16:

700 frames of 14 sec each (over 4 days, between the clouds)

Raspberry PI HQ Camera

76/418 Scope

Balcony in a midsized City

It's clear to me that with my skills (absolute beginner) and the Raspberry Pi cam this is not the upper limit of what is possible, but without the drift I can now take pictures, which I think is really great!

Feedback from other testers is still welcome!

Regards,

Mat
