INDI Library v2.0.7 is Released (01 Apr 2024)

Bi-monthly release with minor bug fixes and improvements

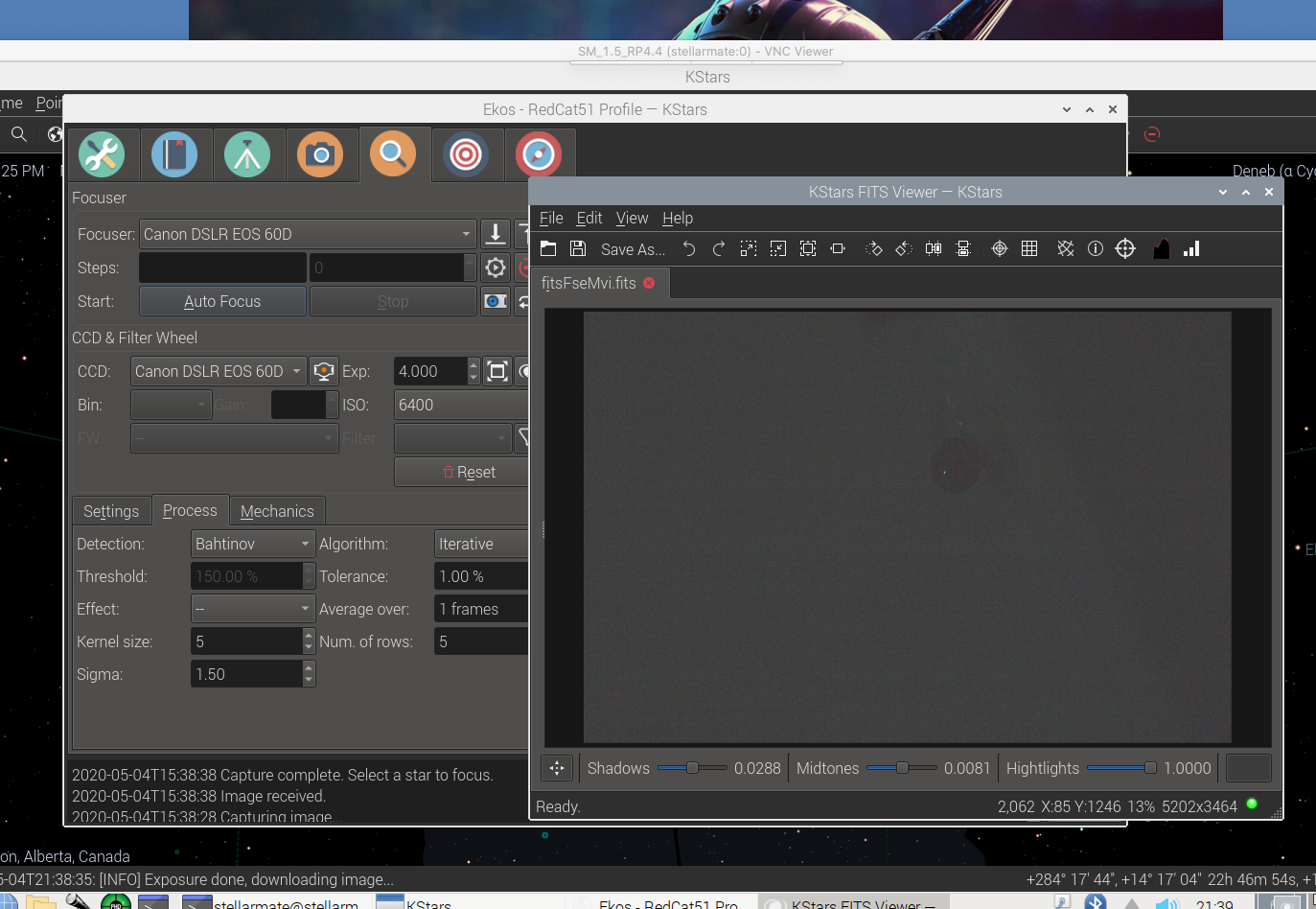

New Bahtinov Mask Assistant Tool

Replied by Patrick on topic New Bahtinov Mask Assistant Tool

Hi Wouter,

You probably don't see my changes because I have not yet merged my changes into the main branch. I have created a branch called 'bahtinov-mask-focus' in my kstars repo. If you check out this branch, then you will have my changes.

$ git branch bahtinov-mask-focus

$ git checkout bahtinov-mask-focus

$ git pull origin bahtinov-mask-focus

Here is an overview of the changes I made:

In kstars/kstars/ekos/focus/focus.ui there is a combobox called focusDetectionCombo. This should have an extra value named Bahtinov Mask. The implementation of the Bahtinov algorithm is in kstars/kstars/fitsviewer/fitsdata.cpp, and the drawing of the lines on the image is in kstars/kstars/fitsviewer/fitsview.cpp. Some extra parameters have been added to the Options class.

I am a little short on time this week to develop, but hope to get some time for it next week.

There is not much logging in the code, so I usually test it visually by aiming at a star with the Bahtinov mask in place and then loading the image in KStars. Then I check whether the focus module draws the right lines on top of the image.

I found out last week that that is not the case. Some lines are drawn, but they don't make sense. Probably because the lines are not detected right. It could also be that the coordinates for drawing the lines are incorrect, but I have to look into that some more. Printing the variables of the detected lines will usually help to see if the detection did work.

I have saved the images I captured and am feeding them into my example application (which I didn't share on github yet) which uses the exact same algorithm. I noticed that the example application had a really hard time recognizing the lines in the images because the image was too noisy. I have been tweaking the few parameters I have, but they seem to be quite different for each image I feed it.

So currently I am trying two things:

- add some sort of algorithm to analyse the data and determine the best values for the parameters

- implement a completely different algorithm to detect the lines in the image

The current algorithm works in these steps:

1. start processing the image the same way as it is done in the Canny algorithm that was already implemented, namely get the image data and apply a MEDIAN and HIGH_CONTRAST mask

2. apply the Sobel algorithm (determine horizontal, vertical and diagonal edges)

3. apply the thinning algorithm (sharpen the edges)

4. apply a threshold (make it a 3-color image: black, 50% grey and white)

5. apply hysteresis (make it a black and white image)

6. apply the Hough transform, which gives back an array of lines detected in the image

7. take the 3 brightest lines and use them to determine the focus offset:

7.1) sort the lines in order of angle

7.2) determine the intersection between the two outer lines

7.3) determine the distance between the intersection of the outer lines and the middle line; that is the offset

8. place the data for drawing the lines in the BahtinovEdge class (derivative of the Edge class used for drawing the focused stars)

9. use the BahtinovEdge class to draw the lines on the image (in fitsview.cpp)
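The offset calculation from the three brightest lines (sort by angle, intersect the two outer lines, measure the distance to the middle line) can be sketched in a few lines of Python. This is only an illustration, not the KStars code: it assumes each line is given in Hough normal form as a pair (rho, theta) with x*cos(theta) + y*sin(theta) = rho, and the function names `intersect` and `focus_offset` are made up for this example. Sorting by theta also assumes the three angles do not wrap around 0/180 degrees.

```python
import math

def intersect(line_a, line_b):
    """Intersection of two lines given as (rho, theta), where
    x*cos(theta) + y*sin(theta) = rho (Hough normal form)."""
    (r1, t1), (r2, t2) = line_a, line_b
    # Determinant of the 2x2 system; zero means the lines are parallel.
    det = math.cos(t1) * math.sin(t2) - math.sin(t1) * math.cos(t2)
    if abs(det) < 1e-12:
        raise ValueError("lines are parallel; no unique intersection")
    x = (r1 * math.sin(t2) - r2 * math.sin(t1)) / det
    y = (r2 * math.cos(t1) - r1 * math.cos(t2)) / det
    return x, y

def focus_offset(lines):
    """Signed distance (in pixels) from the intersection of the two outer
    lines to the middle line. Zero means the three spikes cross in one point."""
    if len(lines) != 3:
        raise ValueError("expected exactly three lines")
    # Sort the lines in order of angle: outer, middle, outer.
    first, middle, last = sorted(lines, key=lambda line: line[1])
    x, y = intersect(first, last)
    rho_m, theta_m = middle
    # Point-to-line distance for a line in normal form.
    return x * math.cos(theta_m) + y * math.sin(theta_m) - rho_m
```

The sign of the result tells on which side of the middle spike the outer spikes cross, which is what distinguishes the two directions of defocus; which sign means "move in" or "move out" depends on the mask orientation and is not specified here.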

The alternative algorithm I was thinking of is the same algorithm used in the bahtinov-grabber application. Namely, take the image, rotate it through 180 degrees in steps of 1 degree, and for each step calculate the average brightness of each horizontal line. When a diffraction line in the image is positioned horizontally, the average brightness of that horizontal line will have a higher value. Store the highest value in an array.

After all rotation steps have been done, determine the three highest values in the array; these should be the three diffraction spikes of your Bahtinov mask.

Then the processing continues from step 7 as described before.
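The rotate-and-average search described above can be sketched as follows. This is a rough Python illustration and not the bahtinov-grabber or KStars code. Instead of resampling a rotated image, it assigns each pixel to the row it would land in after rotation, which gives the same row averages. The names, the `min_separation_deg` parameter (used so that neighbouring angles of the same spike are not counted three times) and the minimum row length are assumptions made for this example.

```python
import math

def brightest_row_per_angle(image, step_deg=1):
    """For each rotation angle, return (best row average, angle, row index)."""
    height, width = len(image), len(image[0])
    cx, cy = (width - 1) / 2, (height - 1) / 2
    min_pixels = min(width, height) // 2  # ignore short rows near the corners
    profile = []
    for deg in range(0, 180, step_deg):
        s, c = math.sin(math.radians(deg)), math.cos(math.radians(deg))
        sums, counts = {}, {}
        for y in range(height):
            for x in range(width):
                # Row this pixel falls in once the image is rotated by -deg.
                row = round(-(x - cx) * s + (y - cy) * c)
                sums[row] = sums.get(row, 0.0) + image[y][x]
                counts[row] = counts.get(row, 0) + 1
        best_avg, best_row = max(
            (sums[r] / counts[r], r) for r in sums if counts[r] >= min_pixels
        )
        profile.append((best_avg, deg, best_row))
    return profile

def strongest_angles(profile, count=3, min_separation_deg=10):
    """Pick the `count` brightest angles that are at least
    `min_separation_deg` apart (angles wrap around at 180 degrees)."""
    picked = []
    for avg, deg, row in sorted(profile, reverse=True):
        far_enough = all(
            min(abs(deg - d), 180 - abs(deg - d)) >= min_separation_deg
            for _, d, _ in picked
        )
        if far_enough:
            picked.append((avg, deg, row))
        if len(picked) == count:
            break
    return picked
```

Each returned tuple gives an angle and a row offset from the image centre, which together describe one line; those three lines can then be fed into the offset calculation. On a noisy image the row averages would probably need a background subtraction first, which is left out here.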

I am also trying to apply a gaussian blur filter instead of the MEDIAN and HIGH_CONTRAST mask and see if that makes a difference.
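For reference, a Gaussian blur can be written as two passes of a one-dimensional kernel (rows, then columns). The sketch below is plain Python for illustration only. It is not the filter code in KStars, and the kernel radius of three sigma and the edge handling (repeating the border pixel) are assumptions.

```python
import math

def gaussian_kernel(sigma):
    """Normalised 1-D Gaussian weights covering about +/- 3 sigma."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur_1d(values, kernel):
    """Convolve one row or column, repeating the edge pixel at the borders."""
    radius = len(kernel) // 2
    last = len(values) - 1
    return [
        sum(k * values[min(max(i + j - radius, 0), last)]
            for j, k in enumerate(kernel))
        for i in range(len(values))
    ]

def gaussian_blur(image, sigma=1.0):
    """Separable Gaussian blur of a 2-D list of pixel values."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]  # transpose back
```

A larger sigma suppresses more noise but also widens the diffraction spikes, so the value would have to be tuned against the line detection.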

I hope this information will help you on your way.

Kind regards,

AstroRunner

Replied by Rob on topic New Bahtinov Mask Assistant Tool

"There is not much logging in the code, I usually test it visually by aiming at a star"

You might consider making a simple artificial star. It can be as simple as a light source in a box with a pinhole in some aluminium cooking foil. Along with a short telescope like a spare finder/guider scope, or even a camera, you now have a controlled source without the vagaries of the weather or indeed the time of day. It is important to keep the focal length short if you need a short subject-to-camera distance, for example for use indoors.

Replied by Wouter van Reeven on topic New Bahtinov Mask Assistant Tool

Wouter

Replied by Wouter van Reeven on topic New Bahtinov Mask Assistant Tool

Replied by Patrick on topic New Bahtinov Mask Assistant Tool

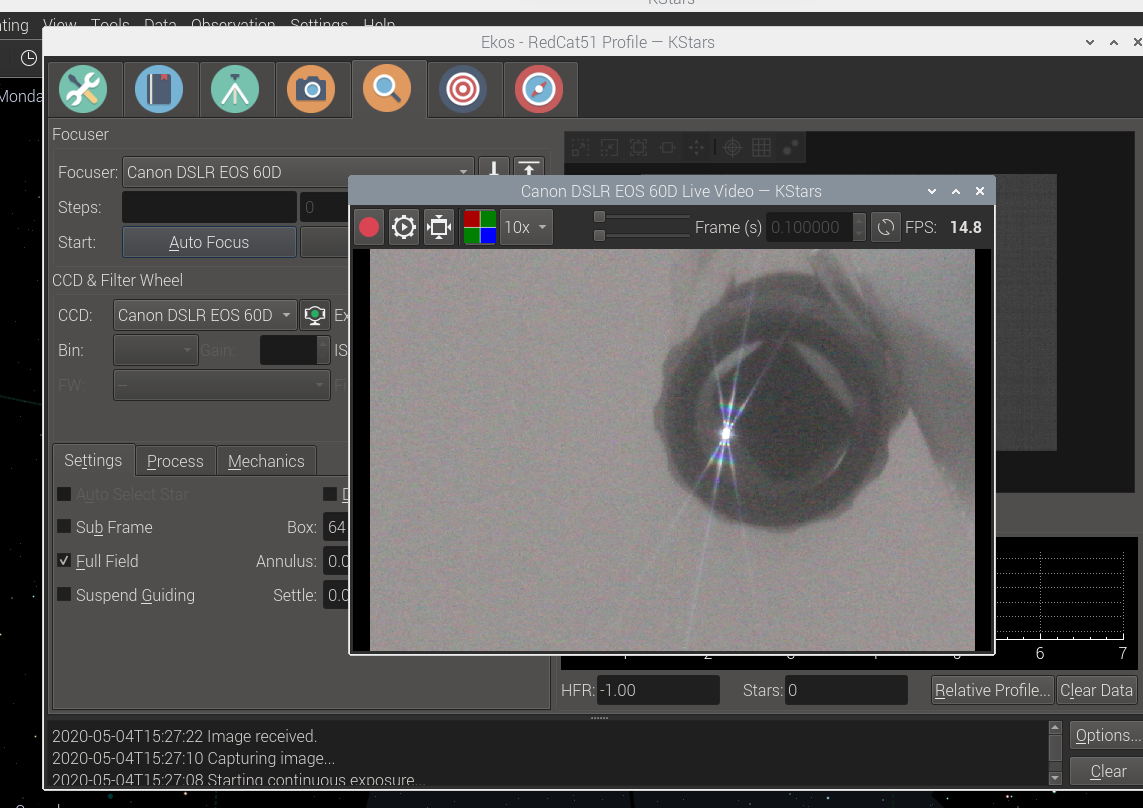

I have finally finished the implementation of the Bahtinov Mask Focus Assistant in KStars/EKOS.

It is currently (April 2020) in review and I hope it will be integrated in the official KStars software soon.

At this moment you can get the latest version of the Bahtinov Mask Focus Assistant from my fork of KDE/kstars: github.com/prmolenaar/kstars

Just take the master branch, build it and you have the Bahtinov Mask Focus Assistant in KStars.

Good luck and have fun testing it.

Let me know if you need some adjustments, then I can pick that up.

Best regards and clear skies!

Replied by David Tate on topic New Bahtinov Mask Assistant Tool

I see it, but could you provide some more steps on how to get this? What to download, command lines to build? I know... if I don't know this then I pretty much should stay away.

I'm really looking forward to this feature.

1. At this time, what I do is put the mask on and select the filter I want (normally starting at the top)

2. Take a quick 5 second shot, (that loads the filter and the focuser moves to the previous night's position)

3. Click the video icon, move the video panel to the side

4. Enter the Focus module and make my adjustments. Once the mask pattern is correct, I enter that value in a spreadsheet that calculates the offset, which I then enter via the "Funnel" icon.

5. I do that for each filter.
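The spreadsheet step in this workflow amounts to subtracting the focuser position of a reference filter from the position found for each other filter. A small Python sketch of that arithmetic follows. The function name, the filter names and the idea of using one filter as the zero point are assumptions for illustration, and it does not describe how Ekos stores filter offsets.

```python
def filter_offsets(positions, reference):
    """Focus offset of every filter relative to the reference filter.

    positions: dict mapping filter name -> focuser position (steps) at
               which the Bahtinov pattern looked correct.
    reference: name of the filter whose offset is defined as zero.
    """
    if reference not in positions:
        raise KeyError(f"no focus position recorded for {reference!r}")
    ref = positions[reference]
    return {name: pos - ref for name, pos in positions.items()}
```

For example, `filter_offsets({"L": 12000, "R": 12035}, "L")` gives an offset of 0 for L and 35 steps for R; the numbers here are made up.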

Oh and then I forget to take off that mask when I align (haha, yeah it happens more than it should)

I'll make a little video tonight showing the actions.

Replied by Wouter van Reeven on topic New Bahtinov Mask Assistant Tool

Thanks @AstroRunner. I'll have a look as soon as possible.

Wouter

Replied by Andrew Couture on topic New Bahtinov Mask Assistant Tool

Wanted to test this software change out - expected outcome to get better focus with the ray trace for feedback on accuracy.

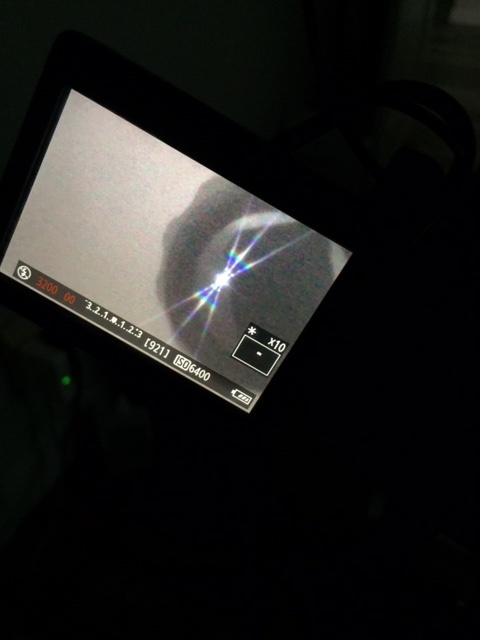

1. Used the Canon rear screen live view to get close.

2. Used Ekos's live view to check

3. Tried numerous exposure times to get the star flare. Didn't see any flare up to a 30 second exposure, even with ISO maxed out.

Left all settings at default. Do you have any suggestions?

I will try this again tomorrow with my 183mmPro.

Thank you for your efforts; perfect app for this OTA/camera

Sky Watcher Esprit 100ED APO

QHY5L-II Mini Guider

Pegasus PPB, DMFC

Xagyl Filter, LRGB+Ha

CGEM DX

RP4.4

William Optics RedCat 51

Star Adventurer

RP4.1

Software

KStars phd2 , Ubuntu, StarTools, Mac OS

AstroDSLR AstroGuider AstroTelescope, AstroImager, Astrometry

Replied by Patrick on topic New Bahtinov Mask Assistant Tool

Thanks for trying the Bahtinov Mask Assistant Tool. Sorry to hear that you have problems getting a good flare. I didn't change anything in the exposure mechanism, so the trouble getting a good flare should not be related to the Bahtinov setting. However, if it does turn out to be a result of my implementation, I will certainly look into it.

You can try the following things to see if that resolves the issue:

1. Select another detection algorithm (e.g. SEP or Gradient) but leave your Bahtinov mask in place, and see whether you still can't get a good flare. The detection algorithm has no effect on the exposure, only on detecting a star. With another detection algorithm Ekos won't be able to detect if the focus is right, but it will show whether the exposure problem is related to the Bahtinov detection algorithm. If switching does change the exposure, then something must have gone wrong in my implementation and I will look into it.

2. In the focus tab, in the image preview area, there are several buttons. One of them is the auto stretch button (a dot with 4 triangles around it). If you press that button, the image will be stretched and that will probably show the flare that you expect. If the button is not pressed, no stretching is applied and the image looks very dim (not many stars are shown, which could be why you don't see the flare).
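To illustrate why stretching makes a faint flare visible, here is a simple percentile-based linear stretch in Python. It is only a sketch of the general idea and not the auto stretch algorithm that KStars uses; the percentile defaults and the function name are assumptions.

```python
def linear_stretch(pixels, low_pct=0.5, high_pct=99.5, out_max=255):
    """Map the low..high percentile range of the image onto 0..out_max.

    pixels: 2-D list of raw pixel values. Values outside the percentile
    range are clipped, so faint structure fills the display range.
    """
    flat = sorted(v for row in pixels for v in row)
    lo = flat[int((len(flat) - 1) * low_pct / 100)]
    hi = flat[int((len(flat) - 1) * high_pct / 100)]
    if hi <= lo:
        # Flat image: nothing to stretch.
        return [[0 for _ in row] for row in pixels]
    scale = out_max / (hi - lo)
    return [
        [min(out_max, max(0, round((v - lo) * scale))) for v in row]
        for row in pixels
    ]
```

Without such a mapping, a 16-bit frame in which the spikes are only slightly brighter than the background is shown almost entirely black.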

I hope these answers will help. If not, let me know.

Replied by Patrick on topic New Bahtinov Mask Assistant Tool

Since yesterday afternoon (4th of May 2020), the Bahtinov Mask Focus Assistant has been integrated into the main branch of KDE/kstars. So it is now available for everyone and will probably be included in the next release of KStars.

Good luck using this feature. Let me know if you have any questions or encounter any problems regarding the Bahtinov Mask Focus Assistant.

Kind regards,

AstroRunner

Replied by Rick Wayne on topic New Bahtinov Mask Assistant Tool

Replied by Wouter van Reeven on topic New Bahtinov Mask Assistant Tool
