Hello,

I got a new CMOS camera (Omegon vtec571 = ToupTek ATR3CMOS26000KMA). The INDI ToupTek driver (2.1) crashed immediately. I have generated a log file. What can I do?

Thomas

Hi everyone

The system is StellarMate OS 1.8.1 running on a Raspberry Pi 4, which I access via VNC from a MacBook. First, I'd like to say that everything is working and I am getting good results. My questions are about interpreting parts of the Analyze tab.

Last night I was taking 30-second exposures, dithering every 5 exposures (one-pulse dither). In the screenshot you can see that at a dither, sometimes but not always, there is movement in the RA or DEC direction. By the way, these movements do not show up in the guide graph in the Analyze tab.

So my questions are: why aren't these RA or DEC movements seen at each dither in the Analyze graph? And why are they included when calculating the values in the red RMS curve, given that guiding is actually suspended during a dither? Any help would be appreciated.

Mike
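
Whether a dither excursion inflates an RMS figure depends only on which samples go into the average. A minimal sketch with made-up RA errors (in arcseconds) and a hypothetical dither window given as sample indices; it illustrates the arithmetic only and makes no claim about how the Analyze tab actually builds its RMS curve:

```python
import math
from typing import Iterable, Sequence


def rms(values: Sequence[float]) -> float:
    """Root-mean-square of a sequence of guide errors (e.g. arcsec)."""
    if not values:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(v * v for v in values) / len(values))


def rms_excluding(errors: Sequence[float], skip: Iterable[range]) -> float:
    """RMS over the samples whose index is not inside any skip range.

    The skip ranges stand in for dither/settle windows (hypothetical);
    how Ekos marks or excludes such windows is not assumed here.
    """
    skipped = set()
    for r in skip:
        skipped.update(r)
    kept = [e for i, e in enumerate(errors) if i not in skipped]
    return rms(kept)


# Hypothetical RA errors in arcsec: steady guiding plus one dither jump.
ra_errors = [0.4, -0.3, 0.5, -0.4, 3.0, 2.1, 0.3, -0.5, 0.4, -0.3]
dither_window = [range(4, 6)]  # samples 4 and 5 fall inside the dither

print(f"RMS with dither samples:    {rms(ra_errors):.2f}")
print(f"RMS without dither samples: {rms_excluding(ra_errors, dither_window):.2f}")
```

With these numbers, the two samples taken during the dither (3.0 and 2.1) raise the RMS from about 0.40 to about 1.21 arcsec, so a curve that does not skip dither periods will show a visible bump at each dither that produced a large move.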

Also the INDI nightly build does not yet exist for Ubuntu 24.04 on Raspberry Pi:

Err:7 ppa.launchpadcontent.net/mutlaqja/indinightly/ubuntu noble Release

404 Not Found [IP: 2620:2d:4000:1::81 443]

Reading package lists... Done

E: The repository 'ppa.launchpadcontent.net/mutlaqja/indinightly/ubuntu noble Release' does not have a Release file.
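
To see exactly what apt is asking for, the URL can be rebuilt by hand. This assumes the standard Debian repository layout, in which apt fetches `dists/<codename>/InRelease` or `dists/<codename>/Release` under the repository root, and uses the fact that Ubuntu 24.04's codename is `noble`. The helper names below are hypothetical:

```python
import urllib.error
import urllib.request

# Ubuntu release numbers mapped to their codenames (a small subset).
UBUNTU_CODENAMES = {
    "20.04": "focal",
    "22.04": "jammy",
    "24.04": "noble",
}


def ppa_release_url(owner: str, ppa: str, ubuntu_version: str) -> str:
    """URL of the Release file apt requests for a Launchpad PPA."""
    codename = UBUNTU_CODENAMES[ubuntu_version]
    return (
        f"https://ppa.launchpadcontent.net/{owner}/{ppa}/ubuntu/"
        f"dists/{codename}/Release"
    )


def release_exists(url: str, timeout: float = 10.0) -> bool:
    """True if the server answers a HEAD request with HTTP 200.

    Needs network access. An HTTP error such as 404 returns False;
    other network failures (DNS, timeout) propagate as URLError.
    """
    req = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status == 200
    except urllib.error.HTTPError:
        return False
```

Calling `release_exists(ppa_release_url("mutlaqja", "indinightly", "24.04"))` requires network access; as long as no `noble` suite has been published in the PPA, it should return `False`, matching apt's 404.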

Ah! I bet there was some compile flag that controls this feature. Maybe it was set for the Apple silicon builds but not for Intel.

Is the original poster also on Intel?

> I assume this happens when INDI starts and connects to the focuser. Is there any logging of what it reports?

I assume most drivers will query the focus position regularly, e.g. every second. Mine does.

If you enable driver logging for your focuser, you'll probably see these values, though I suppose that depends on the driver.

Hy
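
The polling behaviour described above can be sketched as a small loop. This is written in Python rather than the C++ that real INDI drivers use, and the class, the two callbacks, and the one-second period are illustrative assumptions, not the INDI API:

```python
import time
from typing import Callable, Optional


class FocuserPoller:
    """Hypothetical driver-side loop that re-reads the focuser position.

    read_position stands in for whatever serial/USB query a real driver
    sends; publish stands in for pushing the value to the client (Ekos).
    """

    def __init__(self, read_position: Callable[[], int],
                 publish: Callable[[int], None], period_s: float = 1.0):
        self.read_position = read_position
        self.publish = publish
        self.period_s = period_s
        self.last: Optional[int] = None

    def poll_once(self) -> bool:
        """Query the device; publish only if the position changed."""
        pos = self.read_position()
        if pos != self.last:
            self.last = pos
            self.publish(pos)
            return True
        return False

    def run(self, cycles: int) -> None:
        """Poll a fixed number of times, sleeping period_s between polls."""
        for _ in range(cycles):
            self.poll_once()
            time.sleep(self.period_s)
```

Because the value is re-read every cycle, a move that updates the device's own counter (for example, one made with a hand controller attached to the focuser) shows up at the next poll; a move the device cannot sense never does.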

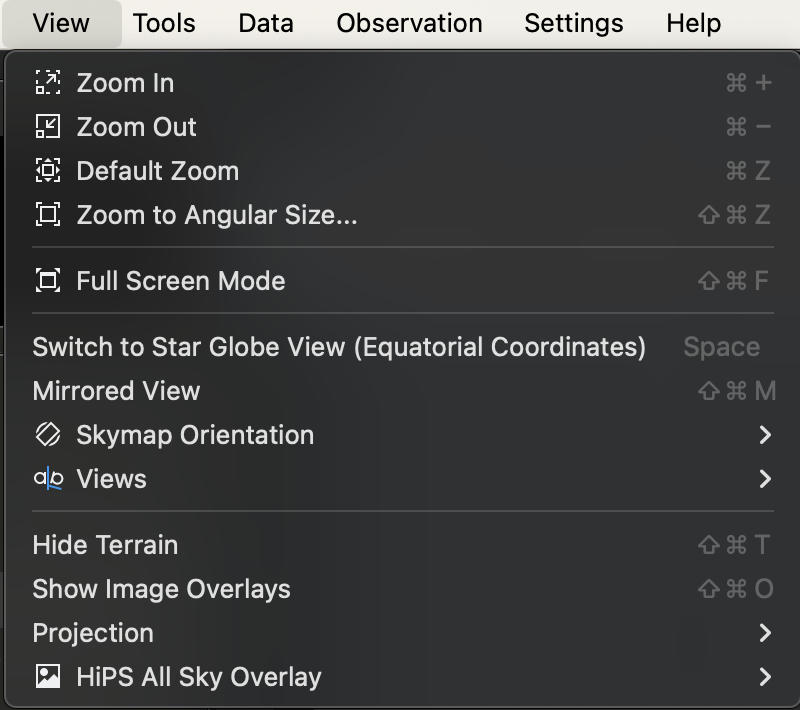

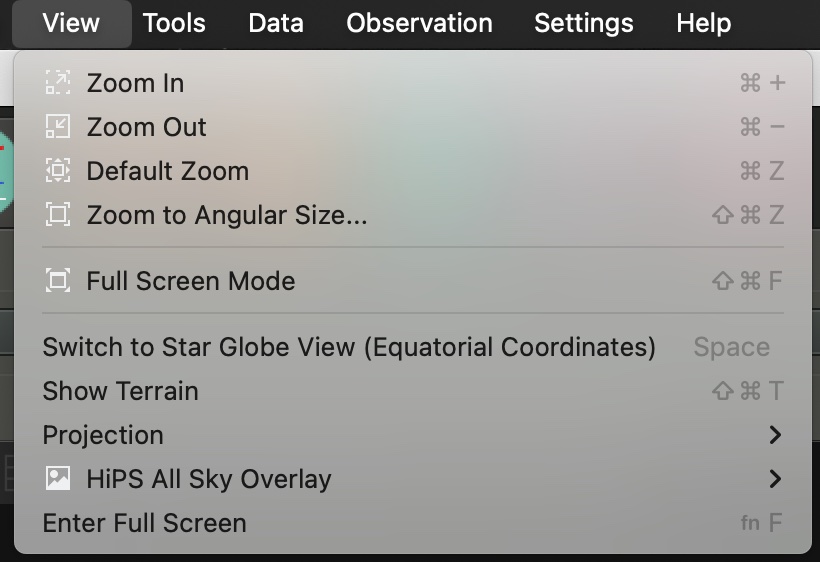

I have the exact same build on my Mac and I don't see it. What macOS are you running? I have the most recent Sonoma 14.4.1 on an Intel iMac. Here is what the View menu looks like:

Polar alignment crashes in the SM app on Android (latest release).

The ZWO EAF has an optional hand paddle that lets you adjust the focus manually, and when you use it, it does update the step count in the EAF itself. That's why I have one for each of my units. It's also handy whenever I want to do live viewing with the scopes.

There may be some EAFs out there that have a clutch to disconnect the motor from the shaft, but I don't see any way those could keep the rotation count on the device in step with the travel of the focus assembly, since the two are no longer bound to each other.

It's not really different from loosening the clutch on your mount and moving the scope by hand: the mount may still say it's pointed at one location, but once you've moved it manually, that position no longer applies.
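
The difference between the paddle and a clutch can be expressed as a toy model of open-loop step counting. The assumption is that the focuser has no absolute encoder, so its position is simply the running sum of the steps the controller itself drove. The class and method names are hypothetical and not tied to any ZWO firmware or API:

```python
from dataclasses import dataclass


@dataclass
class SteppedFocuser:
    """Hypothetical model: the controller only knows steps it drove itself."""
    reported_steps: int = 0  # what the controller believes and reports
    actual_steps: int = 0    # where the drawtube really is

    def motor_move(self, steps: int) -> None:
        # Moves driven through the controller (software or a paddle
        # wired to it) change both values together.
        self.reported_steps += steps
        self.actual_steps += steps

    def clutch_move(self, steps: int) -> None:
        # With the clutch released, the shaft turns without the motor,
        # so the controller has nothing to count.
        self.actual_steps += steps

    @property
    def error(self) -> int:
        """How far the reported position has drifted from reality."""
        return self.actual_steps - self.reported_steps
```

After any number of `motor_move` calls the error stays at zero; a single `clutch_move` leaves a permanent offset that only a re-home or manual resync could remove, just like a mount moved by hand after loosening its clutch.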

I would not suggest it right now myself.

Just tried a new install of Ubuntu 24.04 LTS on one of my mini PCs.

When I went to install KStars/INDI/Ekos, I got these errors:

indi-full: Depends: indi-apogee but it is not going to be installed

Depends: indi-astroasis but it is not installable

E: Unable to correct problems, you have held broken packages.
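
apt's two wordings mean different things: "is not installable" means no candidate version of that package is available from the configured sources (for example, no build exists yet for the new release), while "is not going to be installed" means a candidate exists but the resolver could not install it, typically because something it needs cannot be satisfied. A small sketch that sorts apt's error text by that wording; the regular expression is an assumption based on the output quoted above, not a stable apt interface:

```python
import re

# Matches lines like "Depends: indi-astroasis but it is not installable",
# with an optional version constraint such as "(>= 2.0)" after the name.
DEPENDS_RE = re.compile(
    r"Depends:\s*(?P<pkg>[\w.+-]+)(?:\s*\([^)]*\))?\s+but\s+(?P<reason>.+?)\s*$"
)


def classify_unmet(apt_output: str) -> dict:
    """Group unmet dependencies from apt's error text by the stated reason."""
    result = {"not_installable": [], "blocked": [], "other": []}
    for line in apt_output.splitlines():
        m = DEPENDS_RE.search(line)
        if not m:
            continue
        pkg, reason = m.group("pkg"), m.group("reason")
        if "is not installable" in reason:
            result["not_installable"].append(pkg)  # no candidate at all
        elif "is not going to be installed" in reason:
            result["blocked"].append(pkg)          # candidate exists
        else:
            result["other"].append(pkg)            # e.g. version mismatch
    return result
```

Packages in the `not_installable` group are the ones to look for in the repository for the new release; the `blocked` ones usually resolve once those are available.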

Hmm,

I downloaded the 3.7.0 stable DMG on my Mac (build date 2024-04-04T05:15:20Z), and I do see the Views sub-menu.

Thanks, Hy.

> Many (perhaps most?) focusers report their position to the INDI driver, which in turn sends it to Ekos.

> So, Ekos gets told the focuser position.

I assume this happens when INDI starts and connects to the focuser. Is there any logging of what it reports?

> Internally, the focuser firmware will very likely do as you said: "record where it last was and imagine that it's still there".

Some focusers can also be adjusted manually. How would that be accounted for?

Adding usb_max_current_enable=1 to /boot/config.txt MAYBE improved things with the mount: I just did a number of daylight GOTOs and they were generally accurate. Still no reliable joy from the camera or focuser, though. Sometimes it works, sometimes it doesn't.
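
If you want to script this change instead of editing the file by hand, a minimal idempotent sketch is below. The helper is hypothetical and works on the file's text only; depending on the OS release, the file may live at /boot/firmware/config.txt rather than /boot/config.txt. Note also that config.txt supports conditional sections such as `[pi4]` and `[all]`; this sketch ignores them and appends a missing key at the end, where it falls under whichever section is last:

```python
def ensure_setting(config_text: str, key: str, value: str) -> str:
    """Return config_text with exactly one active `key=value` line.

    Existing active lines for the key are replaced by the first match and
    duplicates are dropped; commented-out lines (starting with '#') are
    left alone. If the key is absent, the line is appended at the end.
    """
    wanted = f"{key}={value}"
    out, found = [], False
    for line in config_text.splitlines():
        stripped = line.strip()
        is_active = not stripped.startswith("#")
        if is_active and stripped.split("=", 1)[0].strip() == key:
            if not found:
                out.append(wanted)
                found = True
            continue  # skip duplicate active lines for the same key
        out.append(line)
    if not found:
        out.append(wanted)
    return "\n".join(out) + "\n"
```

Running it twice on the same text gives the same result, so it is safe to call from a setup script that may be re-run.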

Many (perhaps most?) focusers report their position to the INDI driver, which in turn sends it to Ekos.

So, Ekos gets told the focuser position.

Internally, the focuser firmware will very likely do as you said: "record where it last was and imagine that it's still there".

Hy

Does it do some kind of query to the focuser device to learn the present position? Is there some action that can force this to happen?

Or does it just record where it last was and imagine that it's still there?
